Measuring sample variability: uBiome bioRxiv paper

Scientists at uBiome recently released results from experiments testing the variability of gut samples. My own experiments show considerable day-to-day variability, so I was interested to see their conclusions, which are based on much more rigorous testing.

The preprint, titled “Measures of reproducibility in sampling and laboratory processing methods in high-throughput microbiome analysis,” reports these high-level results:

Sampling method isn’t that important. Stool is not homogeneous, so you’d expect some variation in abundances depending on where and how you wipe, but when the authors systematically tested one person 11 times, they found that the differences between same-day samples were small. Samples taken the same day were 0.95+ correlated; those taken from the same individual on different days were 0.60+ correlated – much higher than the correlation between different people.

Storage conditions don’t matter (much) either. Whether you store the samples frozen, at room temperature, or in hot weather, your results won’t be different enough to make them invalid.

Sequencing results are pretty consistent. Turning a microbiome gut sample into usable data requires dozens of precise steps, any of which can potentially skew the results, but at least in uBiome’s lab pipeline, the final results are highly reproducible.

All of this is good news to people hoping for important insights from their microbiome testing, but it still left me with some questions.

The paper doesn’t describe exactly how they tested the person (“Subject A”) whose results they found to be consistent over time. This is an experiment I’ve tried too, with over 25 samples’ worth of data, and several people have also sent me their results from occasions when they happened to test twice. Can I replicate the uBiome results?

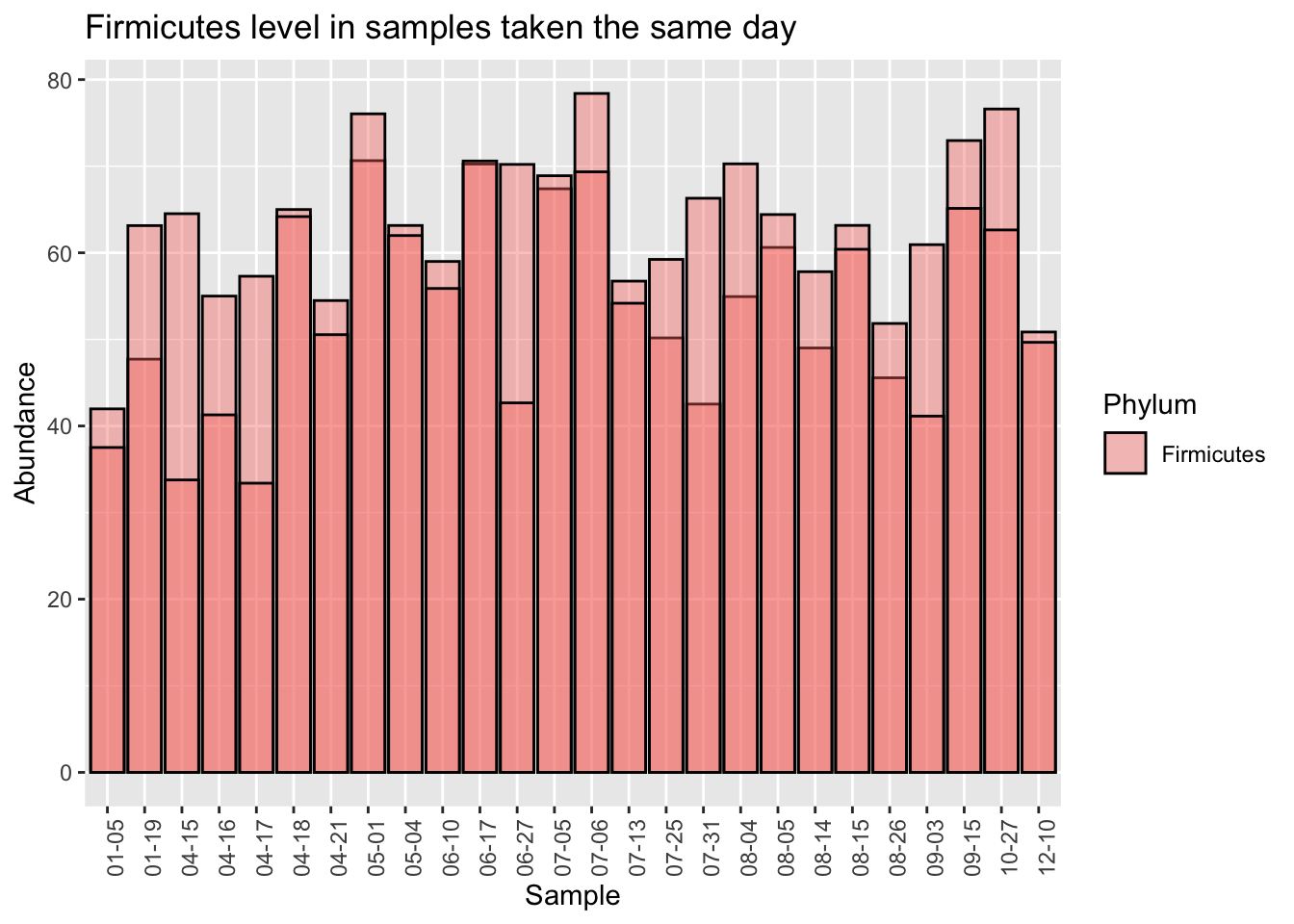

Let’s start by looking at a single phylum, Firmicutes, which is usually the most common in Western guts. Phylum is also one of the broadest taxonomic ranks, so the 16S method used in the uBiome pipeline should be pretty accurate. Using the 25 samples for which I have duplicates taken the same day, I’ll compare the first sampling (“Sample1”) with the second (“Sample2”).

When we eyeball it, Firmicutes doesn’t appear to vary a whole lot between the same-day samples. The black line in each bar is the level of Firmicutes found in the second sample. Although there are a few significant exceptions (04-15, 06-27, 07-31), most of the time the levels for this phylum seem pretty close no matter where you sample.

One limitation of the uBiome paper is that they only looked at a tiny subset of all the genus-level taxa found in the sample. Presumably they did this because they’ve previously shown that those particular genera are accurately represented in the sample, but if you want to know if something is evenly distributed, you can’t rely on a subset. For example, even though a single phylum in my gut, Firmicutes, is reasonably stable on its own, the ratio of Firmicutes to other important taxa is all over the place.

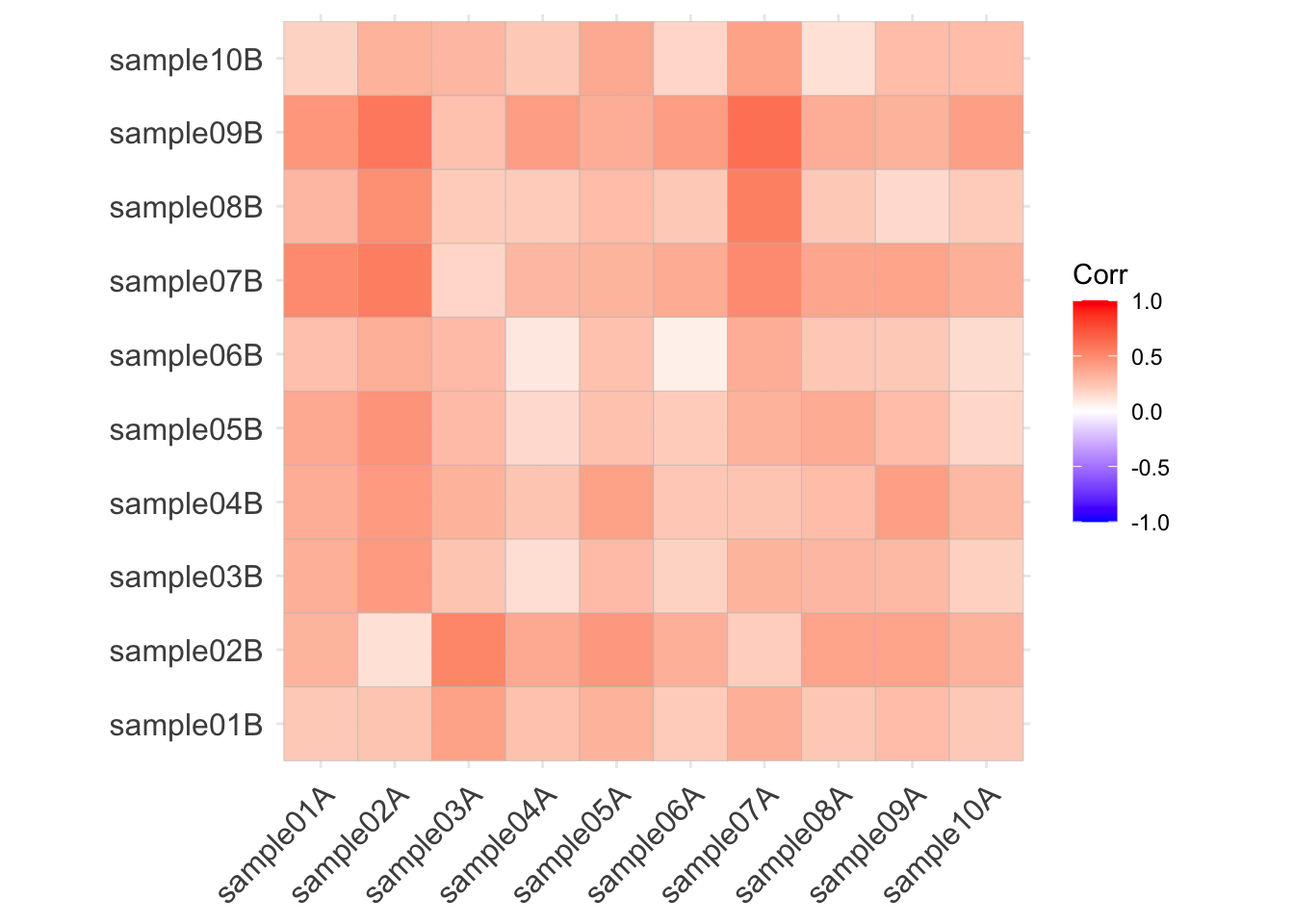

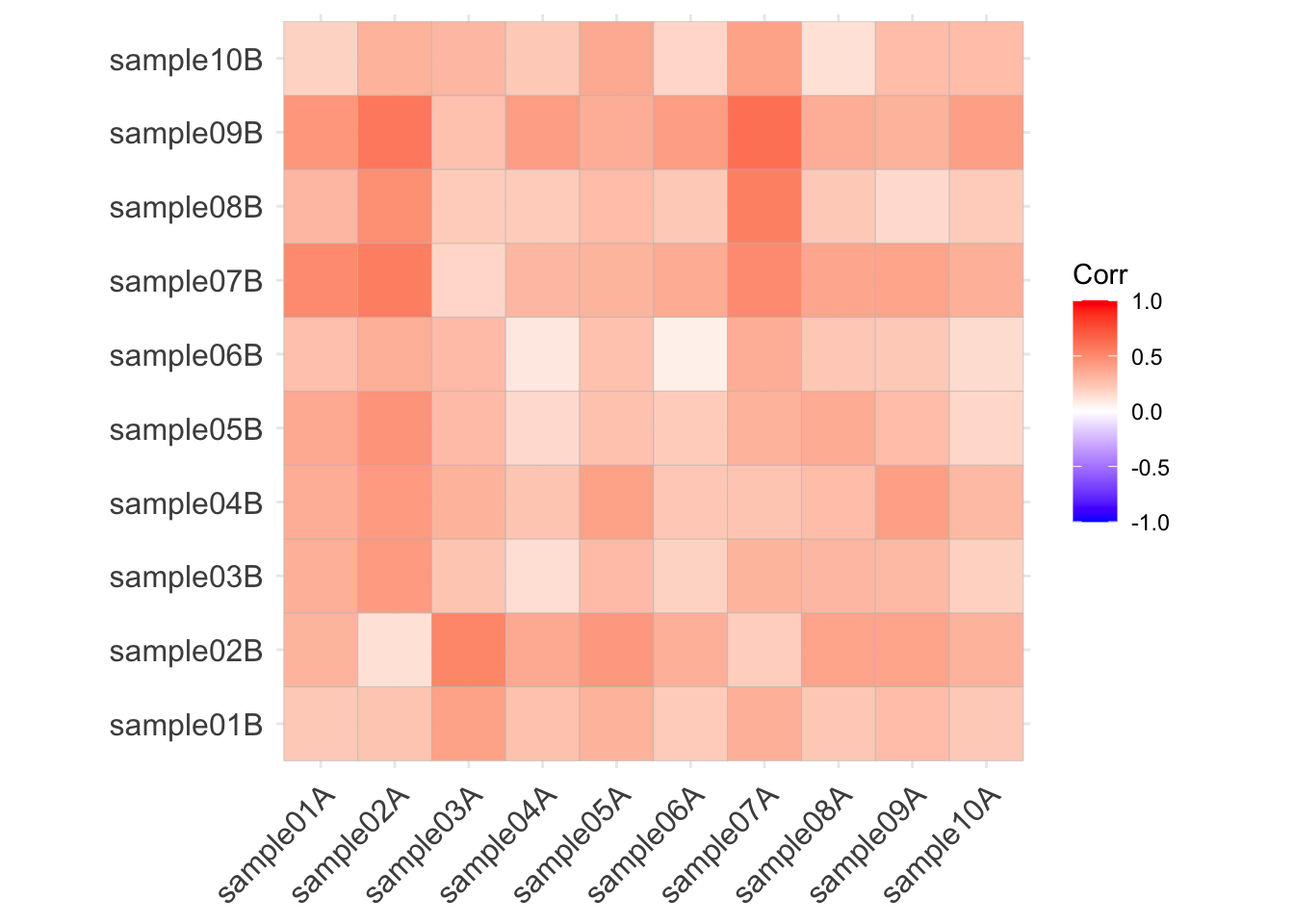

Let’s run the same correlation calculation that uBiome used:
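As a rough illustration of what such a calculation looks like, here is a minimal Python sketch. It assumes a plain Pearson correlation between paired same-day abundances, which may not be the exact metric used in the preprint. The `pearson` helper and the sample values are hypothetical and are not real data.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    dx = [x - mean_x for x in xs]  # deviations from the mean
    dy = [y - mean_y for y in ys]
    cov = sum(a * b for a, b in zip(dx, dy))
    var_x = sum(a * a for a in dx)
    var_y = sum(b * b for b in dy)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)

# Hypothetical Firmicutes percentages for five same-day pairs
# (made-up numbers for illustration, not real data)
sample1 = [55.0, 62.0, 48.0, 70.0, 66.0]
sample2 = [53.0, 60.0, 59.0, 68.0, 51.0]

r = pearson(sample1, sample2)
print(f"same-day correlation: {r:.2f}")
```

With only one value per sample (a single phylum), the correlation is taken across sampling days, so a couple of badly mismatched days can pull it down sharply.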

At the phylum level, I find much less correlation (at best 0.50) than uBiome did (0.95). What are some possible reasons?

First, as noted, they are looking at a subset of 28 taxa that they’ve decided can be most accurately detected using their pipeline. I’m looking at only one phylum. But Firmicutes is the most important, most broadly watched phylum in the gut. If this is measured inaccurately, what does that say about the rest of the experiments?

Second, although I’m studying only a single taxon, they’re using a summary metric of all 28 taxa they measure. The paper doesn’t explain how they summarize 28 microbial abundances into a single number, but I assume they are using some common similarity metric, like Bray-Curtis. This is a simple and often-used way to tell how similar or different two vectors are. I didn’t do that because I’m comparing a single number, not a vector.
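For readers unfamiliar with Bray-Curtis, the following is a minimal Python sketch of the standard dissimilarity formula: the sum of absolute differences divided by the sum of all abundances. Whether the preprint actually uses this metric is an assumption, and the `bray_curtis` helper and the abundance vectors are hypothetical.

```python
def bray_curtis(u, v):
    """Bray-Curtis dissimilarity between two non-negative abundance vectors.

    0.0 means identical composition; 1.0 means no taxa in common.
    """
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    total = sum(u) + sum(v)
    if total == 0:
        raise ValueError("dissimilarity is undefined when both vectors are all zero")
    return sum(abs(a - b) for a, b in zip(u, v)) / total

# Hypothetical abundances for three taxa in two samples (made-up numbers)
sample1 = [10, 20, 30]
sample2 = [20, 20, 20]

d = bray_curtis(sample1, sample2)
print(f"Bray-Curtis dissimilarity: {d:.3f}")
```

Because the result is a dissimilarity, a similarity score would be one minus this value.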

Let’s see if I can make a rough estimate that would be similar to their list of taxa. uBiome’s clinical test tracks 28 microbes at the genus and species level, not all of which can be seen in Explorer.

There are 12 genus-level taxa on both lists (`str_subset(taxa_names(me.gut.dupes),str_c(clinical_genus, collapse = "|"))`).
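The matching step works by joining the clinical genus names into a single regular expression of alternatives and keeping every taxon name that matches it. Here is a minimal Python sketch of the same idea; the `names_matching_any` helper and both name lists are hypothetical stand-ins, not the actual data.

```python
import re

def names_matching_any(names, patterns):
    """Return the names that contain a match for at least one pattern.

    The patterns are joined with "|" into one regular expression and are
    not escaped, so each one is treated as a regex and matches substrings.
    """
    combined = re.compile("|".join(patterns))
    return [name for name in names if combined.search(name)]

# Hypothetical taxa found in a sample and a hypothetical clinical list
sample_taxa = ["Bacteroides", "Bifidobacterium", "Akkermansia", "Roseburia"]
clinical_list = ["Akkermansia", "Bifidobacterium", "Lactobacillus"]

overlap = names_matching_any(sample_taxa, clinical_list)
print(len(overlap), overlap)
```

Since this is substring matching, a short genus name can also match longer, unrelated taxon names that contain it, so the count is worth a manual check.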

Here are the correlations between the clinical taxa:

Here are the correlations among the Bray-Curtis distances:

(which, upon reflection, means absolutely nothing)

Finally, I think the real difference has to do with sampling technique. I poke the swab all over the place into my samples. My guess is that their experimental subject probably swabbed the outside of the sample at two spots. That may or may not be more realistic than my method – it depends on whether you think toilet paper grabs only the outside or not – but it does highlight the importance of consistency in how you take a sample. If, as the uBiome experiments appear to show, you sample only on the outside, then there is probably a lot of similarity in the same sample. If normal people are more like me, sampling all over the place, then my results show the variability may be much higher than uBiome thinks.

Disclaimer: Although I am a former “citizen scientist in residence” at uBiome and have many friends there, I was not involved in this research, nor do I have any current relationship with the company.